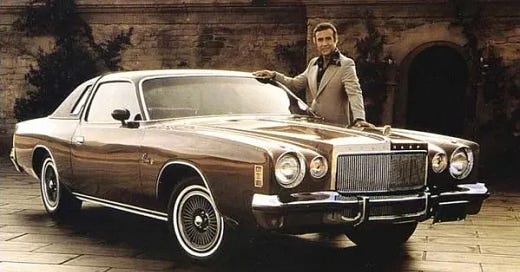

In 1975, Chrysler ran a now-famous advertisement on television for its Cordoba model luxury car. Titled “Tradition,” the ad featured actor Ricardo Montalbán (KHAANN!!!) reading off a fanciful description of the car, and using the phrase “fine Corinthian leather” to describe the seats and interior lining.

This was seven years before I was born, but from what I understand, the Cordoba sold surprisingly well, a hit for Chrysler in an otherwise down year, due to this successful ad campaign cooked up by a firm called Bozell, and the resonance of this phrase: “rich Corinthian leather” rolling off Montalbán’s tongue in the commercial.

But the thing is—there’s no such thing as Corinthian leather.

Corinth is a real city in Greece, but they do not make leather for car seats. Corinth is better known as an Ancient city—one of the largest in the Classical era—a prosperous area where a lot of trade occurred. And it is, of course, also the name of two books in the New Testament, because Paul wrote four epistles to the church there which became part of the liturgy.

So it has an aura of prosperity, even of holiness. The adjective denotes class and luxury and tradition. It’s also beautifully vague—most people wouldn’t swear to being able to say where Corinth really was or what made Corinthian leather better than regular leather—a product of very rare quality, worth paying much more for.

In fact, the leather in the Cordoba came from a factory in Newark, NJ, just like all the other leather in all the other Chryslers out there. Worse, the Cordoba’s leather was not in *any* way different than the leather in the other Chrysler models—not softer, richer, anything. Just more expensive—because of an adjective. A very well-chosen adjective that denotes a certain kind of exclusivity and class and value.

Like all great advertisements, this one plays a little game of “The Emperor’s New Clothes” on us, allowing us to project our deep unconscious desires onto a blank canvas, and imagine something very ordinary is much, much more.

But what does any of this have to do with AI? Or Creative Writing in general, for that matter? Well, I need to back up a step for that, so I promise we’ll come back to that “rich Corinthian leather” very soon.

If you haven’t enjoyed the late panic yet, the current landscape is something like this: ChatGPT and other AI tools are emerging as a new force, not just in the tech world but lately in the creative world. For the past five years or so, AI technology has been part of our Echos and Dots and Siris and kind of helpful in doing things people don’t care much about, finding ways to make mundane tasks a little easier, automating dialing for reservations or putting on a musical playlist, etc. By promising to make our lives a little simpler, these products have become widespread, though after years of this, only 25-30% of Apple customers use Siri at all and even then only 1-3% use it routinely. (One theory is that people are not comfortable using it in public, and then there’s also the fact that it quite often misunderstands even basic commands, even after years of supposed “learning”.)

Just as I was skeptical five years ago that most people would really walk around chatting with their phones instead of typing into them, I remain skeptical today about the predictions the tech world has for the new disruptive ways AI will change everything forever… though I do fear a few specific ones that I think most of us are missing because we’re too distracted by the sci-fi vision of a HAL-9000 or Skynet taking over and killing us all.

Just as Bitcoin was supposed to destroy regular currency, just as eBooks would end “dead tree books” forever, just as 3D movies would displace the regular ones… we're promised that AI is a technology of such revolutionary power that it will upend almost anything you can think of in life forever. (Count me skeptical, as you may detect from my sarcastic tone… something fundamental, I'll note, that an AI can't do.)

Still, today, people who don’t care about or understand the purpose of art in any way have now begun to try to increase profits by using AI to do things that human writers and artists used to do: design magazine covers or compose background music for TV shows or even write ad-copy (let’s see an AI come up with “rich Corinthian leather”!).

It’s being used in these ways as we speak, in places where the human artistic work was largely invisible before. Most people never spent much time thinking about who wrote the instrumental music in an episode of Supernatural they’re binging, or who designed the image on the new issue of Cooking Light. If computers took over all of this work tomorrow, we’d likely barely notice.

AI is good at this filler work because it is made to take volumes of other sample work and intuit something “new” but similar that fits the same mold, and you can’t easily tell the difference. A corporate manager will easily jump at the chance to use the AI, which costs little, over paying a human being, as long as they think they can get the “same” work for less, and faster. That’s capitalism, baby.

This concerns me (it should concern all of us) because these are the kinds of jobs that many creative people do to pay the bills so that they can create more meaningful art. If we take away the ad-copy writing jobs, we’ll make it that much harder for an up-and-coming novelist to keep a roof over their head while they write their debut… and that’s very bad. Still, when I talk to my students in these positions, they seem to find lots of other options: they deliver for Postmates instead, or do part-time landscaping, and all sorts of other interesting things. When I was in this phase of my career, I fixed printers and installed billing software at medical offices. Artistic life has always, to some degree, been about finding ways of scraping by, for better or worse. Still, I think it is worth it for unions and other workers’ advocacy groups to fight these changes to the way this kind of “unseen” creative work gets done.

In any case, this is not what most people are worrying about when it comes to AI. The new scary thing is that the latest evolution of this technology has churned out apps and services that promote themselves as being able to “assist” us in doing not just the scut-work but in making the real artistic, meaningful work itself.

The worry is no longer about a banal Food Magazine cover that isn’t distinguishable from another Food Magazine cover, but about an AI-created one that is indistinguishable from one done by Annie Leibovitz. Because now I can ask Midjourney or some other product to show me an image of whatever I want, done in the “style of” Annie Leibovitz and it will plagiarize one for me in under a minute.

With only some simple inputs, DALL-E can generate visual art along these same lines. Beatoven or Soundraw can create a new musical composition based on a few parameters you set for it.

A text AI program like ChatGPT can answer questions, draft memos, create a travel itinerary, write legal briefs, or even scenes from a sitcom.

On the creative writing side, you have products like Writerly, Sudowrite, etc. claiming that they’ll “learn” your writing style and then write your novel for you, thus speeding up what is otherwise a long and agonizing process.

As a teacher, I’m concerned, of course, about plagiarism, and about students using any or all of these tools to do their homework for them. Though so far I’ve not seen any of these services spit out anything I’d give more than a B- to (being generous), the fear is that in some coming evolution the programs become good enough to mimic A-quality work.

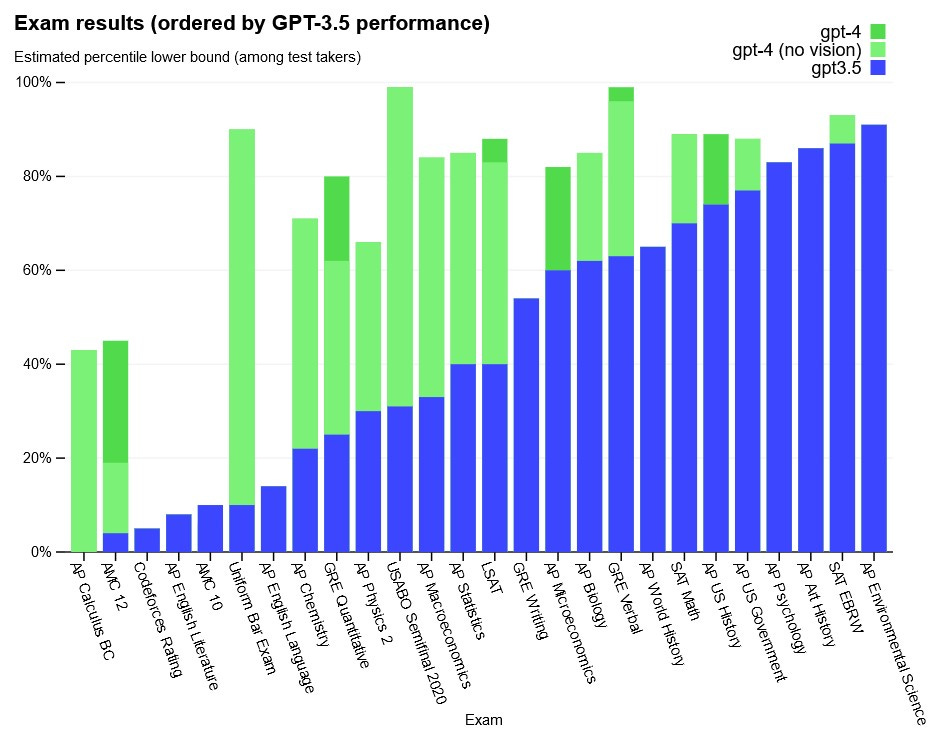

One viral image shows that while the new ChatGPT-4 could effectively ace the LSAT exam, it scored very poorly on an AP Literature exam. This makes sense because it is good at making basic inferences to solve a logic puzzle, but has no way of understanding what Moby Dick actually means. All it can do is look at what other people have said it means, and cobble together some amalgam of their answers and present it as a sensible argument. But because the AP Lit exam is not testing logic but actual intuition and creative thinking, which ChatGPT can only pretend to have (so far anyway), it gets a 2 on the exam, not enough to get college credit.

I'll note that compared to the really breathtaking achievements in visual art and music, AI still doesn't seem to be particularly great at doing any of these text-based things. It also can't be trusted to provide true facts in a paper or an argument. It seems to just make up anything it wants, unbeknownst to you. So it can write you a paper for class full of perfect MLA citations, but you may then discover the hard way that it has fabricated all the sources. (This has happened with real legal briefs filed in court, in a particularly embarrassing way already.)

But let's skip over these issues and just look at how it writes generally.

I've asked Google’s BardAI to write a scene where two characters, Will and Kathy, meet at a farmer’s market and fall in love:

Will and Kathy met at the farmer's market on a Saturday morning. It was a beautiful day, and the sun was shining brightly. The air was filled with the smell of fresh produce and flowers.

Will was browsing the stalls, looking for some ripe tomatoes. He spotted Kathy standing at the next stall, looking at the flowers. She was beautiful, with long dark hair and big brown eyes. Will couldn't take his eyes off of her.

He finally worked up the courage to talk to her. "Hi," he said. "I'm Will."

"I'm Kathy," she said. "Nice to meet you."

They talked for a while, and Will learned that Kathy was a teacher. She loved working with kids, and she was passionate about education. Will was a writer, and he was working on his first novel. They talked about their dreams and their hopes for the future.

As they talked, Will felt a connection with Kathy that he had never felt with anyone else. She was intelligent, funny, and kind. He knew that he wanted to see her again.

They exchanged numbers and made plans to go out for coffee the following week. Will couldn't wait to see her again.

It’s awful writing. It isn’t even College Sophomore level writing. It’s largely unspecific, tells everything without showing anything, does not utilize any sensory detail, and can’t create anything like an inner life for either Will or Kathy, despite now having some “conflict” to play with. Basically, it just doesn’t know what it is doing at all.

Now, it could be possible to sit and work with these texts, asking the AI to rewrite various parts, adding dialogue or detail and deleting nonsense… but at some point this becomes just as hard if not harder than writing it yourself. It doesn't seem to be able to “learn” from corrections so it doesn’t make the same mistakes again, though this is what programs like Sudowrite claim to be doing now.

Soon, soon, they all say, it will all be seamless and perfect.

Any pointing out of the current shortcomings of AI is answered this way. The technology has come so far, so fast. Therefore it is only a matter of time before it gets so good at writing fiction that it will be indistinguishable from a skilled human writer. If AI can write a pop song or paint a picture like a human being, then why not write a novel like one?

The Large Language Models behind the text-based AI programs are trained on petabytes of writing, including lots of novels (which their makers are now being sued over). The AI “reads” all this material and then attempts to discern its patterns and foundational rules so it can create something new that still follows those rules. So it can now build sentences, paragraphs, even tell simple stories, by following the codes that it has learned based on what it was trained on.

I suppose you could say the same thing, minus a few petabytes, is being done by my college writing students. They've consumed some amount of literature and are trying to figure out how to make more that follows the same basic rules.

As I said, this gets you a B in my class, and I'd give the AI samples I've read less than that even.

What gets an A? Well, as I tell my sometimes frustrated students, imitation is a start, but it isn't enough. Not yet. To be truly creative you have to take what you've seen before and innovate. Experiment. What happens if you mix some Hemingway up with some Asimov? What if you take the story of your cat's death and retell it from the perspective of its squeak toy? Does something about that feel authentic to me as a reader? Do I get a sense that you've expressed something difficult, or complicated? That you've gotten excited because you wrote a really magical sentence? Or that your own character has done something you hadn't expected?

Creativity is about surprise, invention, and authentic expression of the creator’s own original thoughts. An AI has no original thoughts. It can't express anything authentic because it has nothing true inside to be authentic to. It will surprise us, randomly, but it won't know on its own that it has succeeded in doing so, because it has no way to know if one thing it spit out is better or worse than another.

But let's say it does evolve to the point where it can receive feedback and adapt to it. (This seems relatively simple so I do think they'll work this out.)

From there, it actually gets even harder. Because to really get to a level of quality in creative writing you also need to understand both why something works and how it has been done.

We can't just glean it passively by reading thousands of stories and interpreting their patterns. A story by Lauren Groff follows different rules than one by A.M. Homes or Jorge Luis Borges or George Saunders. One story will surely gleefully break rules that others stick to rigidly. A late Saunders story, even, feels totally different from an early one. It’s always, always, always changing.

But even restricted to some basics… for a story to work, we have to get inside of the minds of the characters and understand them as people. We have to sort out why they've made the choices they have, how their inner layers conflict with one another, how their emotional states and memories reign over their present mentality.

In other words we have to read empathetically, putting ourselves emotionally in the state the characters are in. We have to think, “What would I do in that situation?” and also “What makes this character do something else?” We have to process emotional information and counterfactuals at a rapid rate, sentence by sentence.

Human behavior is strange and nonintuitive and doesn't follow easily definable rules. That's what makes life interesting, and what makes stories interesting. Unless an AI can come to some comprehension of these whys and hows, I don't think it will ever pass Sophomore Creative Writing.

One article I read about these LLMs focused on the oddly high amount of genre fiction that seems to be on their reading list. The writer speculates that this is because it is the kind of writing that the techies choosing the readings also prefer.

He then wrote this:

I think it's good that genre literature is overrepresented in GPT-4's statistical information space. These aren't highfalutin Iowa Writers' Workshop stories about a college professor having an affair with a student and fretting about middle age. Genre — sci-fi, mystery, romance, horror — is, broadly speaking, more interesting, partially because these books have plots where things actually happen.

This so badly misunderstands the purpose of creative writing (including in genre for god’s sake!!!!) that it has further cured me of my AI worries.

Is a system built on this sad, flawed concept of storytelling going to be able to correct itself? (Also, side bet that the writer of this piece at some point tried to get into an MFA program…)

In any case, a program like Sudowrite can infer where plot points belong, can follow the basics of how to create a character and describe them, show me a setting in a few sentences, even write dialogue between characters. Basically it can do all the nuts-and-bolts stuff I teach in Creative Writing 1. But from what I’ve seen, even being trained on someone’s “style” it can only randomly and accidentally stumble into anything like interesting writing. And even then a human being needs to be there to sift through the output and isolate that nugget. The AI cannot figure out that it has achieved anything like a “breakthrough” on its own.

Someone who thinks a great novel or short story is just a matter of adding some number of elements together in the right order can indeed now mass-produce a piece of fiction, much as a robot in a factory can bake an Entenmann's cake. But that’s what it is, at the end of the day, and it may be delicious, but we won’t easily confuse it for a cake made by a human being who can taste and judge what’s working, improvise based on developing conditions, and apply some creativity or originality to the process the way a real baker would.

But all this is really beside the point.

The promise, again, is that whatever an AI can't do now, it will very soon figure out how to do.

The case here is that eventually the AI will truly develop something like human sentience and be able to do more and more of these things that currently lie beyond its capabilities.

I remain pretty skeptical that this will ever happen, though I'm not an expert other than in having a sense of what in creative work requires the soul (however you define that) and feelings that an AI doesn't possess. But at the end of the day, I'm just not really scared about it. I think it isn't going to happen. Disagree if you want, that's fine.

Recently I read David Brooks’s column (I know, I know) in the NY Times about how he became more worried about AI after speaking with philosopher Douglas Hofstadter, who wrote Gödel, Escher, Bach and is a leading thinker on the nature of human intelligence. I was already, coincidentally, in the middle of Hofstadter’s latest book, I Am a Strange Loop, in which he probes these questions even further. I’ll admit that I’m only halfway done with that book, so maybe this will develop more… but he makes a compelling case through a bunch of mathematical games that our human ability to think self-referentially is not really special or unique and seems to be just an illusion—that our whole sense of ourselves as being “alive” or “sentient” or “unique” is some kind of internal system error.

In one section Hofstadter considers a robotic car named “Stanley” that successfully self-navigated a long journey in the desert, able to make tons of on-the-fly decisions about how to avoid obstacles. Stanley is very impressive, Hofstadter says, but it isn't “thinking” like a human being yet. It can't feel relief at having succeeded, or fear at being in a tricky situation. When it passed a competitor’s car, it couldn't wonder to itself, “Is that car just like me?” because it can't regard itself as a “me” or wish for companionship or feel a streak of competitiveness with a rival. It doesn't feel grateful for its success. It can't entertain a counterfactual and imagine how it would feel to have failed. This is what it means to think like a human being, and this is what is needed to create stories about human beings.

According to Brooks, though, Hofstadter is beginning to think that the AI does in fact have the ability to think in this way. At least it can mimic these thought patterns now, and answer questions as if it felt or thought. It can assess a situation it has been given and understand its “essence”. It still does not regard itself or others, or feel, but Hofstadter thinks it should be granted some level of consciousness, if lower down on the scale, like an insect or a dog or a baby (he spends a lot of time in his book talking about these categories).

Brooks remains skeptical, but less than before. He says he still thinks there is something in human consciousness that can't be replicated by computation, only mimicked.

But he remains worried about the techies who think that this isn't true, and who tell him, “It’s just neural nets all the way down. There’s nothing special in there. There’s nothing about you that can’t be surpassed.”

Basically, my response to that is this: if you really think this is true, then what’s the point of anything? Why compose a symphony? Why paint a picture? Why solve a theorem? Why write a book? Why invent an AI? Just because you can? Purely because you're curious? Or is it because you think there is some deeper understanding of life at the end of that act? Some meaning to be found in your search?

If we’re all just a bunch of electrons and other cosmic dust that, through some random accident, has come to regard itself as special… then yes, some kind of computer simulation can and will eventually achieve those conditions and become “alive.” But it would need to care, in some way, about being alive to compose better than Mozart or write better than Alice Munro. To do that it needs to have something resembling a mortality, a soul. It needs to feel that same yearning you or I feel for achieving understanding and meaning in a limited time frame.

You can take a beautiful cathedral and break it down into a million individual bricks and say, “See—just a bunch of rocks.”

But that doesn’t mean you can make a cathedral out of a bunch of rocks. You can’t do that unless you understand something much, much greater than the fundamentals of masonry. To an AI, I believe, the cathedral will always be just a bunch of rocks in a particular arrangement. Nothing more.

That’s where I’m at, anyway. Disagree if you want… that’s fine. But if we turn away from these ungrounded fears we can focus our energy on where AI really is a threat.

There are the “unseen” creative jobs, as I mentioned. But this could get much bigger. The current WGA and SAG-AFTRA strikes are attempting to draw red lines over what studios can use AI to do. The demands of the AMPTP make it clear that they’d like to do all manner of horrible things, such as have AI write TV episodes that a human writer then “cleans up” for much less money than before, or scan in the image of an actor after one day’s work and then use it in perpetuity for no money, whenever they please. Using computer imagery, they can alter an actor’s performance or layer another skin over the character… it’s devious and evil, but as I’ll get to in a minute, something I also don’t think will work out well for these studios in the long run.

To jump back to Montalbán’s “fine Corinthian leather” for a moment: the ad works because he sounds sophisticated and classy, and makes you think that you will also be sophisticated and classy if you drive the same car, with that fine Corinthian leather. It falls apart to some degree if you think too hard and realize that Montalbán is just getting paid to shill for Chrysler.

Even though the Corinthian leather is all total bullshit, the ad is selling it as a marker of quality and authenticity. You could turn to your buddy and say, “Want to feel the seat? That's real Corinthian leather!” It rarefies the leather, just as now we want to buy “designer jeans” more than regular jeans, or “organic strawberries” instead of regular strawberries, or “bespoke skin care” products more than regular skin care products. And over time as we catch on to the meaninglessness of these terms, they become less effective and must be replaced by new ones.

Now—if you see an ad for a new AI-written TV show, or an AI-written novel, or an AI-composed song… does that compel you to want to see it or read it or listen to it? Maybe at first, as a novelty. You want to see what it is capable of, and what it isn’t capable of. In this way the fear and hype around AI is part of what piques our interest in it. We want to play around with it and also to freak out about it so that we can be part of the ongoing conversation. But these conversations don't go on forever. We'll be pulled in some other direction of fascination, especially if AI does not keep making these huge leaps in ability, as I suspect it won't.

After a decade of dominance, recent Marvel and DC movies have flopped at the box office because audiences are growing weary of seeing CGI space battles and actors in green screen suits. The novelty of that is wearing off, and the studios are trying to cut corners and make the movies more cheaply by rushing the animation work… and it shows.

As of today, the biggest hits of the film world all year are two auteur-written and directed original stories, Barbie and Oppenheimer (one based on a 60-year-old line of toys and one based on a biography that took a decade to write).

They could not be more different on the surface, and yet moviegoers are flocking to see both, sometimes back-to-back, because both have something authentic-feeling about them.

Friends of mine are paying through the nose and waiting weeks to see Oppenheimer in IMAX on 70mm FILM, and the director Christopher Nolan has gone out of his way to emphasize that there are no CGI effects whatsoever in the movie. That’s a selling point, suddenly.

Instead of using CGI for Barbie’s dream house, they built a huge real set, in glaring pink, at 3/4 size. The sales pitch for both movies has been, ironically, that they are “real” and authentic, not computer animated and “fake.”

And these movies were, by the way, each less expensive to make than the latest Marvel box office dud.

We grow tired of whatever feels hollow. There’s something inherently authentic that we really crave.

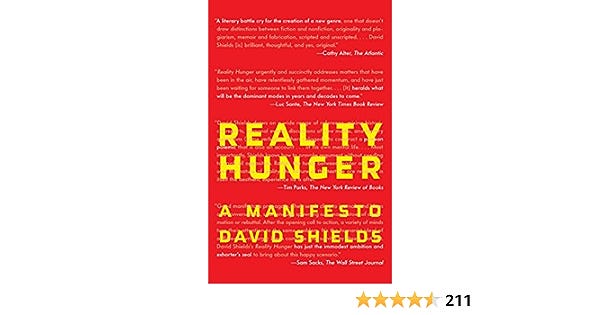

This, ironically, is the argument made by a book (I hate) called Reality Hunger by David Shields from 2010. In it, Shields argues that an innate human craving for authenticity means that readers will ultimately reject the fakery of fiction and novels for nonfiction and memoir/essays. Of course what he missed back then is still true: a novel feels true to the person reading it. We’re happy to suspend our disbelief in something that has a truth at its core, even if it lies in order to share that truth.

Recently I was interviewing the author Garth Greenwell for my upcoming book, REVISIONARIES (out in Nov 2024!) and I asked him why he thought more authors weren’t interested in collaboration like visual artists or musicians or actors.

What I read for is the experience of being inside some individual’s consciousness. Collaboration has a different feel. I do like the privacy of one human mind engaging with another human mind. And something that is charged with aesthetic form and tradition and conversation, but is finally an experience of a self.

With AI, the word “artificial” is right there in the name. Unless it does reach a point where it cannot be distinguished from real intelligence, it will leave us wanting the real thing. For the foreseeable future we are going to be in an “uncanny valley” just as we were in the early days of CGI, where computer-generated humans were just unreal-looking enough that they freaked audiences out.

Eventually the CGI got better and better and we got to the other side of that valley. But as I said, ten or twenty years in, I think we’re beginning to get tired of it again. The problem with reality is that it doesn’t go away—it’s always there, always reminding us that bad art just doesn’t really match it. Great art, sharing real human selfhood, turns that around and makes us see reality itself in a clearer and deeper way.

Yes, lots of people will eat food full of artificial flavoring and will wear clothes made out of synthetic material and dance happily enough to songs churned out by an algorithm. But as long as some more “authentic” and non-artificial version of these things exists (and can be charged more for!) then many people will crave that over the artificial one, given the chance. Just as they’ll always want the “designer jeans” and the “organic strawberries” — they’ll want the author-written novel and the human-painted painting and the person-sung song more, maybe especially more because it has become more highly commodified by the presence of the fake stuff. Nobody wanted to hang out in the Metaverse, as Mark Zuckerberg just spent billions of dollars to figure out. The real world, for all its flaws, is still more compelling.

But what if companies begin to mass produce content with AI and sell it so cheap that people see it as a better, more economical choice? Already I have friends unwilling to pay $30 for a new hardcover novel that took a novelist years to create, instead content to read a far worse eBook somebody churned out in three weeks, but which costs $1 on Amazon. They know it isn't good but they feel like they're getting their money's worth.

That's my real long-term concern. That an AI TV show or book becomes the “fast food” of the coming era. That the market becomes flooded with junk content, as the movies have been with CGI over the past decade.

This is the AI-affected world I’m more concerned about… one where human art is something that most people just don’t get to experience. As it is, we have museums and libraries to help bring these things within the reach of those who desire, deeply, to see a real Monet or to read an actual book instead of a PDF. Just like anything else in our society, I think AI eventually becomes synonymous with “fake”. The “cheap and readymade” option for those who can’t afford to be as choosy, and the wealthy and the elite in society end up hoarding the “authentic” for themselves.

But I don’t want art to become a status symbol any more than it already is.

Remember “fine Corinthian leather”—people will usually pay more for something they believe to be more authentic, classier, fancier. And if they find out that “Corinthian” is just a con, they won’t want it anymore. By that same token, I think that if someone is reading a book, or a news article, and loving it, only to discover it was written by an AI, they’re going to feel cheated, not impressed. They’re going to leave the experience with a greater desire to find the real thing next time, and to not be fooled again.

Here's one final thought, and it's an example of exactly what ChatGPT can't and won't ever figure out how to do.

When I hear that phrase, “rich Corinthian leather,” I don't really think about the ad, or even hear Montalbán saying it. I had never actually watched it before sitting down to write this article. (Surprise!)

When I hear that phrase, I hear my high school friend Kevin saying it.

He drove me everywhere in my Senior year, when I was still only 16, in his busted Toyota Corolla. The ‘a’ was actually missing from the word ‘Toyota’ on the back bumper, so we called it the “Toyot”. Anyway, Kevin used to (sarcastically!) stroke the cheap fabric seating in the Toyot and say, “rich, Corinthian leather…” in an imitation of the ad, which I guess he had seen somewhere before. He was the one who told me Corinthian was meaningless, and that the leather was the same as the rest. This odd fact has just swirled around in my brain for 25 years, useless to me until this week. But I remembered it, for some reason, as I was thinking about AI. And it took me a while to work through why I was making that connection and what it meant.

Can an LLM do that? Connect a random phrase from a car commercial to a present day situation? Could it generate that memory? Come up with the “Toyot” on its own? And what if I add that Kevin had something bizarre hanging from his rearview mirror?

I asked BardAI and here's what it thought up.

A miniature rubber chicken; a googly-eyed ball; a piece of yarn with a dangling cat toy; a tiny stuffed animal; a peace sign; a “hang in there” sign; a mirror that reflects the driver’s face; a mini disco ball; a “honk if you love unicorns” sign.

Not bad. Not great. A few that don't really make sense at all. About par for the course. Not worth the time it took me to type in the prompt.

My imagination, or yours, could do better, faster. What if it was a shrunken head? What if it was a taxidermied squirrel? What if it was a string of Mardi Gras beads?

For me, the best answer is the one my memory provides in a split second: A green plastic pickle, full of pickle-flavored gum. He used to dare everyone to try some, and sometimes he’d pretend to perform unsightly acts upon it to make everyone laugh.

See that? That’s a living moment. You believed it. Words created an experience that felt authentic to you as you read it.

Until ChatGPT can come up with something that good, I'm going to get back to work.
